Personally, I think it is desirable to report as many statistics relevant to your research question as possible. As always, I'm not satisfied.

Confidence intervals, effect sizes, and p-values (all of which can be calculated from the test statistics and degrees of freedom) present answers to different but related questions. To stop kidding ourselves that statistical inferences based on a single … I'm pretty skeptical about the likelihood that this will end up being the 'new' statistics, and I've seen some bright young minds being brainwashed to … Psychological Science is pushing confidence intervals as … I don't feel them in the way I feel p-values, but that might change.
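To illustrate that an effect size can be computed from nothing more than the test statistic and its degrees of freedom: for an F-test with effect degrees of freedom df₁ and error degrees of freedom df₂, partial η² follows directly from F. This is the standard conversion; the symbols are introduced here for illustration.

$$
\eta_p^2 = \frac{F \times df_1}{F \times df_1 + df_2}
$$

For example, a hypothetical F(2, 50) = 5.00 gives η²p = 10 / (10 + 50) ≈ .167.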

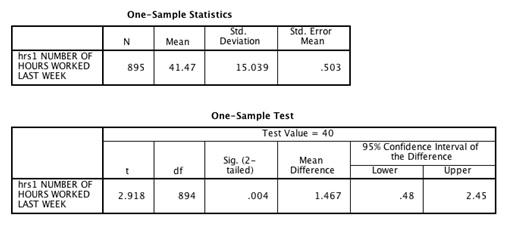

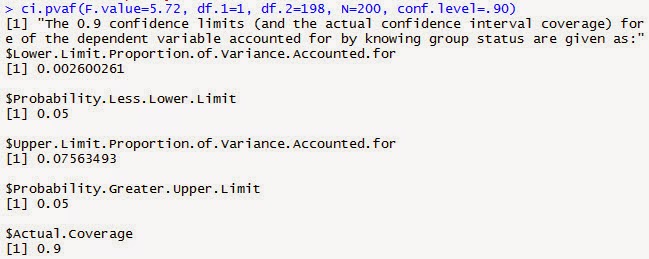

I think you should report confidence intervals because calculating them is pretty easy and because they tell you something about the accuracy with which you have measured what you were interested in.

Confidence intervals are confusing intervals. For a paper that addresses this topic, see Steiger (2004). If you don't want to read it, you should know that while Cohen's d can be both positive and negative, r² or η² are squared, and can therefore only be positive. This is related to the fact that F-tests are always one-sided (so no, don't even think about dividing that p = .08 you get from an F-test by two and reporting p = .04). If you calculate a 95% CI, you can get situations where the confidence interval includes 0 but the test reveals a statistical difference with p < .05. This means that a 95% CI around Cohen's d equals a 90% CI around η² for exactly the same test. Furthermore, because η² cannot be smaller than zero, a confidence interval for an effect that is not statistically different from 0 (and thus would normally include zero) necessarily has to start at 0. You report such a CI as 90% CI [.00; XX], where XX is the upper limit of the CI. Again, Karl Wuensch has gone out of his way to explain this in a very clear document, including examples.

I found out that for within designs, the MBESS package returns an error. This error makes sense in between-subjects designs (where the sample size is larger than the degrees of freedom), but not in within-subjects designs (where the sample size is smaller than the degrees of freedom for many of the tests). Thankfully, Ken Kelley (who made the MBESS package) helped me out in an e-mail by pointing out that you could just use the R code within the ci.pvaf function and adapt it. The code below will give you the same values (to at least 4 digits after the decimal) as the Smithson script in SPSS. Just change the F-value, confidence level, and df.1 and df.2.
Request it in SPSS (or you can use my effect … Regrettably, MBESS doesn't give partial η², so you need to … Here we see the by now familiar lower limit and upper limit …